Evolutionary Multi-Objective Optimization (EMOO)

Evolutionary optimization is an established tool for exploring complex parameter spaces. It uses strategies from biological evolution to select, modify, and breed new models. Classically, the quality of a model is determined by a single distance function. If multiple properties of a model are to be optimized, however, a rather arbitrary weighting of these properties is required to combine them into a single distance function again.

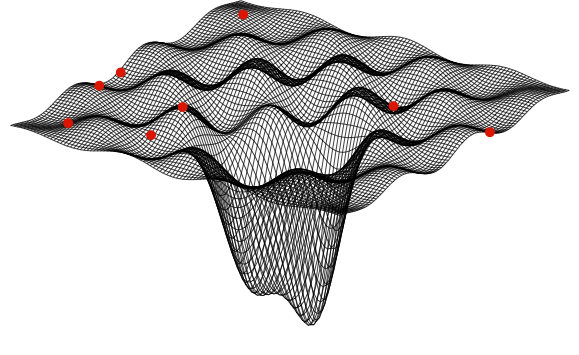

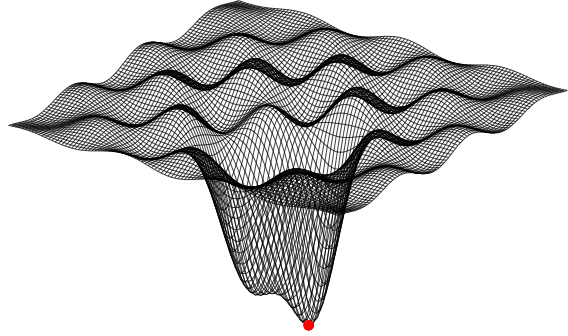

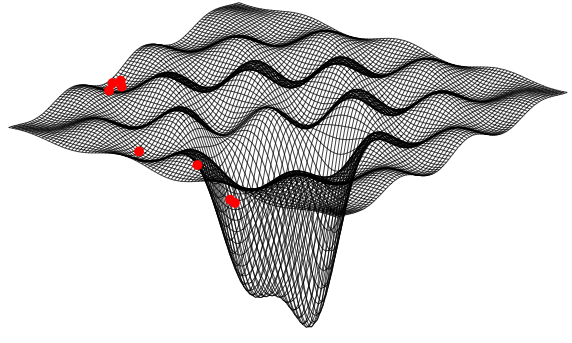

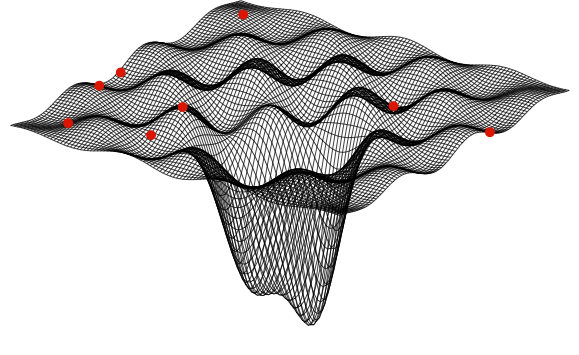

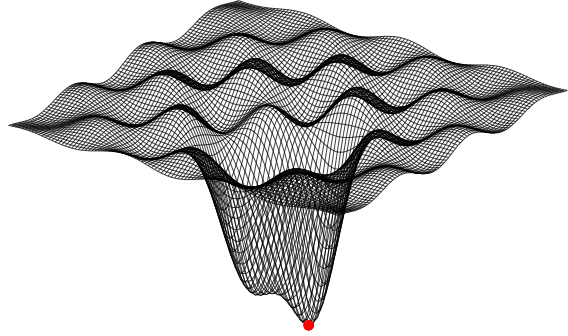

Multi-objective optimization strategies instead optimize multiple, possibly conflicting distance functions at the same time, which also makes it possible to target noisy data. This powerful optimization method is therefore well suited for fitting multi-compartmental neuron models to experimental data and has already been used successfully in recent modelling studies in neuroscience (Druckmann et al. 2007; Druckmann et al. 2008; Bahl 2009; Hay et al. 2011; Bahl et al. 2012).
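The difference between a weighted single distance function and a multi-objective comparison can be sketched in a few lines of plain Python. This is only an illustration and not emoo's own code: the function names (`dominates`, `pareto_front`, `weighted_sum`) and the error values are made up for this example. A model "dominates" another if it is no worse on every distance function and strictly better on at least one.

```python
def dominates(a, b):
    """True if error vector a Pareto-dominates b (all objectives are minimized)."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better


def pareto_front(points):
    """Keep only the error vectors that no other vector dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


def weighted_sum(errors, weights):
    """Classical approach: collapse all errors into one distance value."""
    return sum(w * e for w, e in zip(weights, errors))


# Hypothetical errors of four models on two distance functions
models = [(1.0, 5.0), (2.0, 2.0), (5.0, 1.0), (4.0, 4.0)]

# Multi-objective view: all non-dominated trade-offs survive
front = pareto_front(models)

# Single-objective view: the "best" model depends on the chosen weights
best_a = min(models, key=lambda e: weighted_sum(e, (0.9, 0.1)))
best_b = min(models, key=lambda e: weighted_sum(e, (0.1, 0.9)))

print("Pareto front:", front)
print("Best with weights (0.9, 0.1):", best_a)
print("Best with weights (0.1, 0.9):", best_b)
```

With the weighted sum, the winner changes with the weights, whereas the Pareto front keeps every trade-off and leaves the choice among them for later.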

Here I provide the optimization framework that I used to fit a 17-parameter model of a layer 5 pyramidal neuron to experimental data. It is written entirely in Python and uses the Message Passing Interface (MPI) for communication between processors and nodes.

Please contact me if you have comments, improvements or questions.

Enjoy your optimization!

Armin Bahl

Requirements

- I strongly recommend reading the book by Kalyanmoy Deb (Deb 2001).

- Python

- To get the full power of running MPI on a cluster, you should install a job scheduling engine such as Torque.

Setup

- Download and extract emoo_1_0.zip

- Copy the folder emoo to your Python library path (e.g. site-packages), or add the path of the folder containing emoo to your Python path.

- That should be all; emoo can now be imported as a Python module.
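If you prefer not to copy anything into site-packages, the second option can also be done at the top of your own script. The following is a minimal sketch; the directory name is a placeholder and has to be replaced by wherever you extracted the archive.

```python
import os
import sys

# Placeholder location (an assumption for this sketch): the folder that
# CONTAINS the extracted "emoo" folder, not the "emoo" folder itself.
emoo_parent = os.path.expanduser("~/software/emoo_1_0")

# Make the folder visible to Python's import machinery for this session
if emoo_parent not in sys.path:
    sys.path.append(emoo_parent)

# From here on, "import emoo" should work, provided the folder really
# exists at the location given above.
```

Setting the PYTHONPATH environment variable to the same folder has the same effect without touching the script.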

General Usage

- I have tested emoo on my MacBook Pro (OS X Lion) and under Ubuntu Linux, but it should also work under Windows.

- emoo can be imported as a standard Python module; it should check by itself whether mpi4py is properly installed.

- Running your script on a single core without using MPI:

  - <pythonbinary> example1.py

- Running directly on multiple processors using MPI:

  - mpirun -np 2 <pythonbinary> example1.py

  - Set the number of processors (-np x) to the number you would like to use.

- using the job scheduling engine
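The idea that the same script runs with or without MPI can be sketched as follows. This is not emoo's internal check, only an illustration of the usual pattern: try to import mpi4py, fall back to a single process if it is missing, and let each process work on its own share of the individuals. The helper `my_share` and the list `individuals` are invented for this example.

```python
try:
    from mpi4py import MPI  # only available if mpi4py is installed
    comm = MPI.COMM_WORLD
    rank = comm.Get_rank()   # index of this process
    size = comm.Get_size()   # total number of processes (-np x)
    have_mpi = True
except ImportError:
    # No mpi4py: behave like a single process
    comm = None
    rank, size = 0, 1
    have_mpi = False


def my_share(items, rank, size):
    """Round-robin split: process `rank` gets every `size`-th item."""
    return items[rank::size]


# Stand-ins for the parameter sets that have to be evaluated
individuals = list(range(10))
local = my_share(individuals, rank, size)

if rank == 0:
    print("MPI available:", have_mpi, "| processes:", size)
print("process", rank, "evaluates", local)
```

Started with `<pythonbinary>` alone, the single process evaluates all individuals; started through `mpirun -np 2`, each of the two processes receives half of them.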

Examples

- Example 1: Minimize a single distance function with three parameters

- Example 2: Minimize two conflicting distance functions with two parameters

- Example 3: An application to neuroscience: Find ion channel densities to tune a neuron model.
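To give an impression of what a problem like Example 1 involves, here is a small standalone sketch of an evolutionary loop that minimizes a single distance function with three parameters. It does not use emoo and is not the code of the example scripts. The target values, parameter bounds, population size, and mutation width are all assumptions chosen for illustration.

```python
import random


def distance(params, target=(1.0, -2.0, 0.5)):
    """Squared distance to a hypothetical target parameter set."""
    return sum((p - t) ** 2 for p, t in zip(params, target))


def evolve(n_generations=60, pop_size=40, n_keep=10, sigma=0.3, seed=0):
    rng = random.Random(seed)
    # Start from random individuals inside assumed bounds [-5, 5]
    population = [[rng.uniform(-5, 5) for _ in range(3)]
                  for _ in range(pop_size)]
    for _ in range(n_generations):
        # Selection: keep the individuals with the smallest distance
        population.sort(key=distance)
        parents = population[:n_keep]
        # Breeding: uniform crossover of two parents plus Gaussian mutation
        children = []
        while len(parents) + len(children) < pop_size:
            mum, dad = rng.sample(parents, 2)
            child = [rng.choice(pair) + rng.gauss(0, sigma)
                     for pair in zip(mum, dad)]
            children.append(child)
        # Parents survive unchanged, so the best distance never gets worse
        population = parents + children
    return min(population, key=distance)


best = evolve()
print("best parameters:", best)
print("remaining distance:", distance(best))
```

The three steps in the loop correspond to selecting, breeding, and modifying models. A multi-objective version would replace the sort by a single distance with a ranking based on several distance functions.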

contact:

- bahl "an at here" neuro "a dot here" mpg "another dot here" de

References

Deb, K. (2001). Multi-objective optimization using evolutionary algorithms. New York, NY, USA: John Wiley & Sons, Inc.

Druckmann, S., Banitt, Y., Gidon, A., Schürmann, F., Markram, H., & Segev, I. (2007). A novel multiple objective optimization framework for constraining conductance-based neuron models by experimental data. Frontiers in Neuroscience, 1(1), 7–18.

Druckmann, S., Berger, T. K., Hill, S., Schürmann, F., Markram, H., & Segev, I. (2008). Evaluating automated parameter constraining procedures of neuron models by experimental and surrogate data. Biological Cybernetics, 99(4-5), 371–379.

Bahl, A. (2009). Automated optimization of a reduced layer 5 pyramidal cell model based on experimental data. Diplomarbeit, HU-Berlin.

Bahl, A., Stemmler, M. B., Herz, A. V. M., & Roth, A. (2012). Automated optimization of a reduced layer 5 pyramidal cell model based on experimental data. Journal of Neuroscience Methods.
